Chris Niccolls recently published a “love letter” to the old Panasonic GM5 camera (link). Many of the qualities he highlights (small size, simplicity, good-enough image quality, and usability) also apply to a couple of my own cameras, such as the pictured Sony RX100 (2012) and the Canon EOS M50 (2018). And like Chris, I would not take these cameras along on a bird-photography trip. But photography can be many things, and top-tier speed, image stabilization, or pixel count is not what matters most in every situation.

In summer it is a joy to get around with a light camera-and-lens combination and immerse yourself in landscapes, street photography, or the macro world of small details. An older camera handles all of these very well, perhaps even better than a new, powerful, and very bulky full-frame system camera with its enormous, ultra-fast lens.

Sony's high-quality RX100 compact has enjoyed a kind of renaissance among a new generation of photography enthusiasts (or rather the whole camera family has: Sony has released seven generations of RX100 cameras in total, the latest in 2019). Try a YouTube search for the RX100 model name, for example. The phenomenon is probably linked to a broader retro nostalgia for technology, and in particular to a backlash against the latest technology that emphasizes the simplicity, spontaneity, and pared-down character of photography, a reaction that has grown stronger in recent years. Analog film photography had already been gaining popularity earlier, but now even digital point-and-shoots more than a decade old are “in fashion”.

At the heart of the RX100 is a 1-inch Exmor CMOS sensor with a resolution of 20.2 megapixels, an ambitious choice for a compact camera. The RX100 also supports true RAW shooting, and for an old compact its image quality is quite good. Still, I find that this camera is most overshadowed by a smartphone camera in quick everyday shooting situations: although the lenses and sensors of my iPhone 15 Pro Max cannot physically match the Sony's Exmor sensor and Carl Zeiss Vario-Sonnar T* zoom lens (f/1.8–f/4.9, 28–100 mm full-frame equivalent), a modern smartphone can largely overcome those physical limitations through powerful computational image processing. In terms of usability, the iPhone's large, bright OLED touchscreen is also in a different world from the small and, by today's standards, relatively dim LCD screen on the Sony (the RX100 has no separate viewfinder). Nevertheless, I still use the Sony regularly, especially for black-and-white photography; the camera's small size and “matchbox-sized” screen seem to suit that world best. Next in that direction is the Fujifilm Instax Mini Evo, which produces small physical paper prints but also works as a hybrid camera; I might write more about it some day.

The Canon M50 sees much more everyday use in my hands, and a key reason is that it is a compact mirrorless system camera with interchangeable lenses. Over the years I have acquired five of Canon's original EF-M lenses (plus a bunch of old EF lenses used via an adapter, plus some third-party lenses): the EF-M 15-45mm f/3.5-6.3 IS STM (kit lens), EF-M 22mm f/2 STM, EF-M 28mm f/3.5 Macro IS STM, EF-M 32mm f/1.4 STM, and EF-M 55-200mm f/4.5-6.3 IS STM. (Note, by the way, that the M50 has an APS-C sensor, so these apertures and focal lengths should be read relative to that sensor size; Canon's APS-C crop factor is roughly 1.6.) Of the third-party lenses, the one most worth mentioning is probably the manual-focus Laowa “CA-Dreamer” 65mm f/2.8 2x Ultra Macro APO (link to Ken Rockwell's article). In practice, however, this ultra-macro lens always needs extra light, flash technique, and preferably also a sturdy macro focusing rail, so that the shots needed for focus stacking can be placed very precisely at different depths. Usually the EF-M 28mm f/3.5 Macro IS STM is enough for me; it also has a small ring-type light built into the front of the lens.
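To make the APS-C remark concrete, here is a small sketch that converts some of the EF-M primes listed above into approximate full-frame equivalents. It assumes a crop factor of 1.6 (the usual rounded figure for Canon APS-C) and applies the common equivalence rule of thumb: focal length times crop factor gives the equivalent field of view, and f-number times crop factor gives roughly the equivalent depth of field. The function name `equivalent` is purely illustrative, and exposure is still governed by the lens's native f-number.

```python
# Approximate full-frame equivalents for some EF-M primes.
# Assumption: Canon APS-C crop factor of 1.6 (rounded).
# focal length x crop -> equivalent field of view
# f-number x crop     -> roughly equivalent depth of field
# (Exposure/brightness is still set by the native f-number.)

CROP_FACTOR = 1.6


def equivalent(focal_mm, f_number, crop=CROP_FACTOR):
    """Return (equivalent focal length in mm, equivalent f-number), rounded."""
    return round(focal_mm * crop), round(f_number * crop, 1)


lenses = {
    "EF-M 22mm f/2 STM": (22, 2.0),
    "EF-M 28mm f/3.5 Macro IS STM": (28, 3.5),
    "EF-M 32mm f/1.4 STM": (32, 1.4),
}

for name, (focal, aperture) in lenses.items():
    eq_focal, eq_aperture = equivalent(focal, aperture)
    print(f"{name}: ~{eq_focal}mm, ~f/{eq_aperture} full-frame equivalent")
```

Under these assumptions the 22mm f/2 frames like roughly a 35mm f/3.2 on full frame, the 28mm macro like about 45mm f/5.6, and the 32mm f/1.4 like about 51mm f/2.2.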

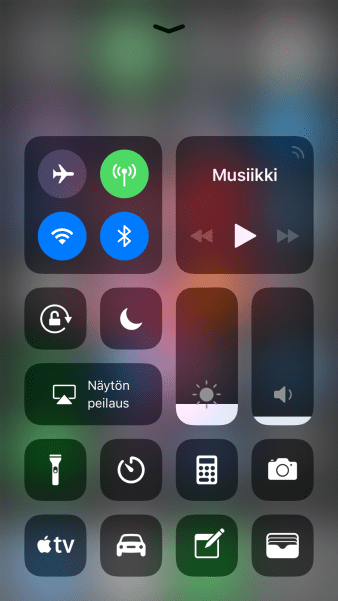

Where even this compact system camera still loses to smartphone shooting is in how effortlessly images can be edited, transferred, and published. In principle the M50 can be connected wirelessly to a smartphone, with images transferred through it for editing and sharing, but I have found that wireless connection (and Canon's Camera Connect mobile app) so slow and unreliable that I usually just wait until I get home and transfer all the images at once to my computer with a memory card reader for further processing.

At the end of his video, Chris Niccolls appeals to camera manufacturers to start making high-quality compact system cameras designed for photography, like the Panasonic GM5, again, preferably updated with modern technology. To his wish list I would above all add wireless image transfer that is much faster and more reliable than today's. It would of course be great if the camera itself ran a (mobile) operating system that supported publishing to the most popular file-sharing, photography, and social media services. But even a camera that could genuinely transfer images to a smartphone “with a single button press” would help a lot. Despite the slowness and clumsiness of older cameras, though, I intend to keep using at least these two compact cameras. For example, the Canon M50's high-quality, lightweight lenses deliver image quality that smartphone computational techniques still cannot match. The Sony RX100, for its part, gives me, especially in black-and-white photography, the kind of tangible, hands-on feel of traditional camera work that fiddling with a smartphone's virtual buttons simply cannot replace.
