I got the first part of my ‘Transition to Mac’ project (almost) ready by the end of my summer vacation. It centred on a Mac Mini (M1/16GB/512GB model), which I set up as the new main “workstation” for my home office and photography editing work. In a nutshell, these are the extras and customisations I have made to it so far:

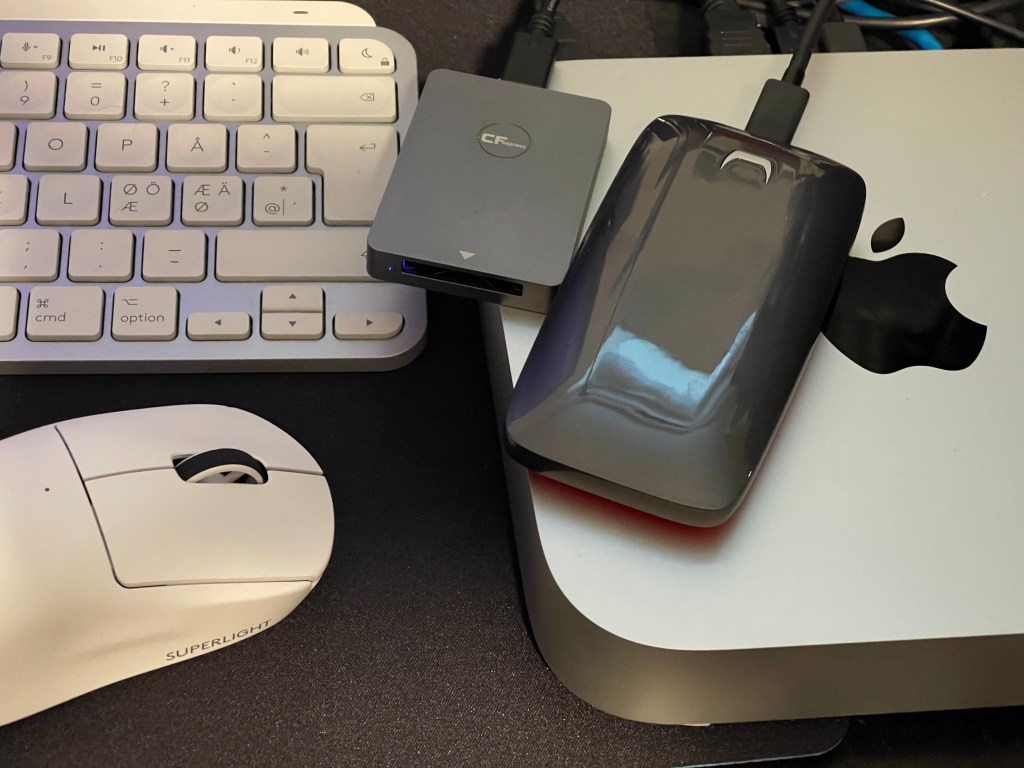

– as the keyboard, Logitech MX Keys Mini for Mac

– as the mouse, Logitech G Pro X Superlight Wireless Gaming Mouse (white)

– for fast additional SSD storage, Samsung X5 External SSD 2TB (with nominal read/write speeds of 2,800/2,300 MB/s)

– and then I made several add-ons/modifications to macOS:

– AltTab (makes the Alt-Tab key combination cycle through all open app windows, rather than only between applications as Cmd-Tab does)

– Alfred (extends the already excellent Spotlight-style search to third-party apps, with direct access to various system commands and other advanced functionality)

– BetterSnapTool (adds snap-to-sides/corners functionality to macOS window management)

– set Sublime Text as the default text editor

– DockMate (adds Windows 10-style app window previews to the Mac Dock; without them, I find the standard Dock pretty useless)

– I then installed the standard software I use daily (most notably Adobe Creative Cloud/Lightroom/Photoshop, MS Office 365, DxO PureRAW, and Topaz DeNoise AI & Sharpen AI)

– The browser plugin installations and login procedures for the browsers I use are a major undertaking, and still ongoing.

– I use the 1Password app and service to manage and synchronise logins/passwords and other sensitive information across devices, which speeds up the login procedures a bit these days.

– There was one major hiccup in the process so far, but in the end the Mac Mini was not to blame. I got a colour-calibrated 27″ 4K Asus ProArt display to connect to the Mac, but there were immediately major issues with the display staying black when the Mac woke from sleep. As this “black screen after sleep” issue has been reported with some M1 Mac Minis, I was sure I had got a faulty computer. But after testing with several other display cables and comparing against another 4K monitor, I was able to isolate the fault to the Asus instead. There was also a mechanical issue with the display’s small plastic power switch (it repeatedly got stuck and had to be forcibly pried back into place). I was just happy to be able to return it, and ordered a different monitor, from Lenovo this time, as they currently had a special discount on a model with a built-in Thunderbolt dock. That should be useful, since the M1 Mac Mini has a rather limited selection of ports.

– There have also been some weird moments recently when my temporary replacement monitor got no image at all, so the jury is still out on whether something is indeed wrong with the Mac Mini in this respect.

– I do not yet have much actual daily usage behind me with this system, but my first impressions are predominantly positive. Speed is one main thing: in my photo editing processes some functions take almost the same time as on my older PC workstation, but mostly things happen much faster. My general impression is that I can now process my large RAW file collections maybe twice as fast as before. Some tools have obviously already been optimised for Apple Silicon/M1, since they run lightning-fast. (E.g. Topaz Sharpen AI was so fast that I didn’t even notice it running the operation before it was already done. This really changes my workflow.)

– The smooth integration of the Apple ecosystem is another obvious thing to notice. I rarely bother to boot up my PCs any more, as I can just use an iPad Pro or the Mac (or iPhone): they all wake up immediately, and I find my working documents seamlessly synced and updated on whichever device I pick up.

– There are, of course, some irritating elements in the Mac for a long-time Windows/PC user, too. The Mac is designed to push simplicity to such a degree that it actually makes some things very hard for the user. Some design decisions I simply do not understand. For example, the simple cut-and-paste keyboard combination for moving files does not work in the Mac Finder (file manager). You need to add a modifier key (Option, in addition to the usual Cmd-V). You can drag files between folders with a mouse, but why not let the standard Cmd-X/Cmd-V combination move files, too? And then there are things like the (very important) keyboard shortcut for pasting text without formatting: “Option + Cmd + Shift + V”! I have not yet managed to get either of these long key combinations into my muscle memory, and judging by Internet discussions, many frustrated users seem to struggle with these Mac “personality issues” as well. But otherwise, a nice system!
